How it works

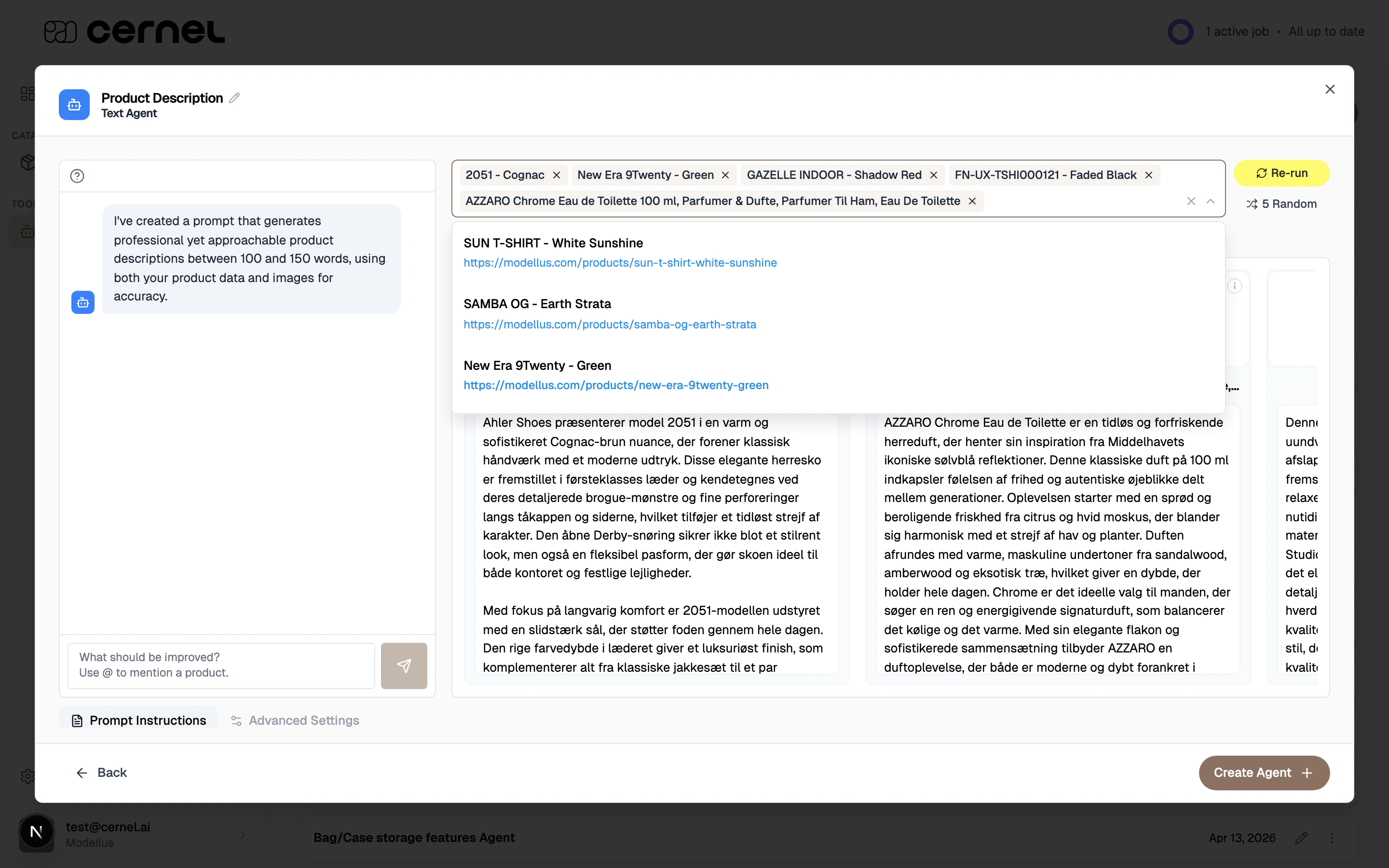

The sandbox lives inside the agent wizard’s final step, both when you create a new agent and when you edit an existing one. You pick a handful of real products from the current category, click Run, and the agent generates values for each one in front of you. For every result you can see the generated value, the AI’s reasoning, and the source data it used.

If the output isn’t quite right, you don’t rewrite the prompt by hand. You tell the sandbox’s agent chat what to change (for example, “make descriptions shorter” or “don’t mention competitor brands”) and the chat rewrites the prompt and re-runs the agent automatically. Each refinement creates a new version of the prompt that you can step through with undo and redo, so you can compare wordings and roll back any change that made things worse.

When the sandbox is showing output you’re happy with, save the agent. The current prompt is committed, and the agent is ready to be linked to attributes and run through enrichment at scale.

Why you would use this

Catch problems before they hit your catalog

See the agent’s output on real products first. Fix tone, structure, and accuracy on a handful of samples instead of discovering issues across thousands of enriched products.

Iterate in chat, not in the prompt editor

Tell the chat what to change in plain language. It rewrites the prompt and re-runs the agent for you, faster than hand-editing a long prompt and guessing at phrasing.

Compare versions with undo and redo

Step back through prompt versions to compare outputs. If a change made things worse, one click takes you back.

Use real products, not synthetic tests

The sandbox runs against your own product data, so what you see is what enrichment will actually produce.

Key concept: Agent chat. The chat panel on the left of the sandbox doesn’t just comment on the output. It rewrites the agent’s prompt based on what you ask, saves the new version in history, and re-runs the agent on your selected products.

Step-by-step guide

Open the sandbox

Create a new agent or edit an existing one. In the wizard, step through to the final step: Review & Test. The sandbox opens automatically with a small random sample of products selected for you.

Choose products to test

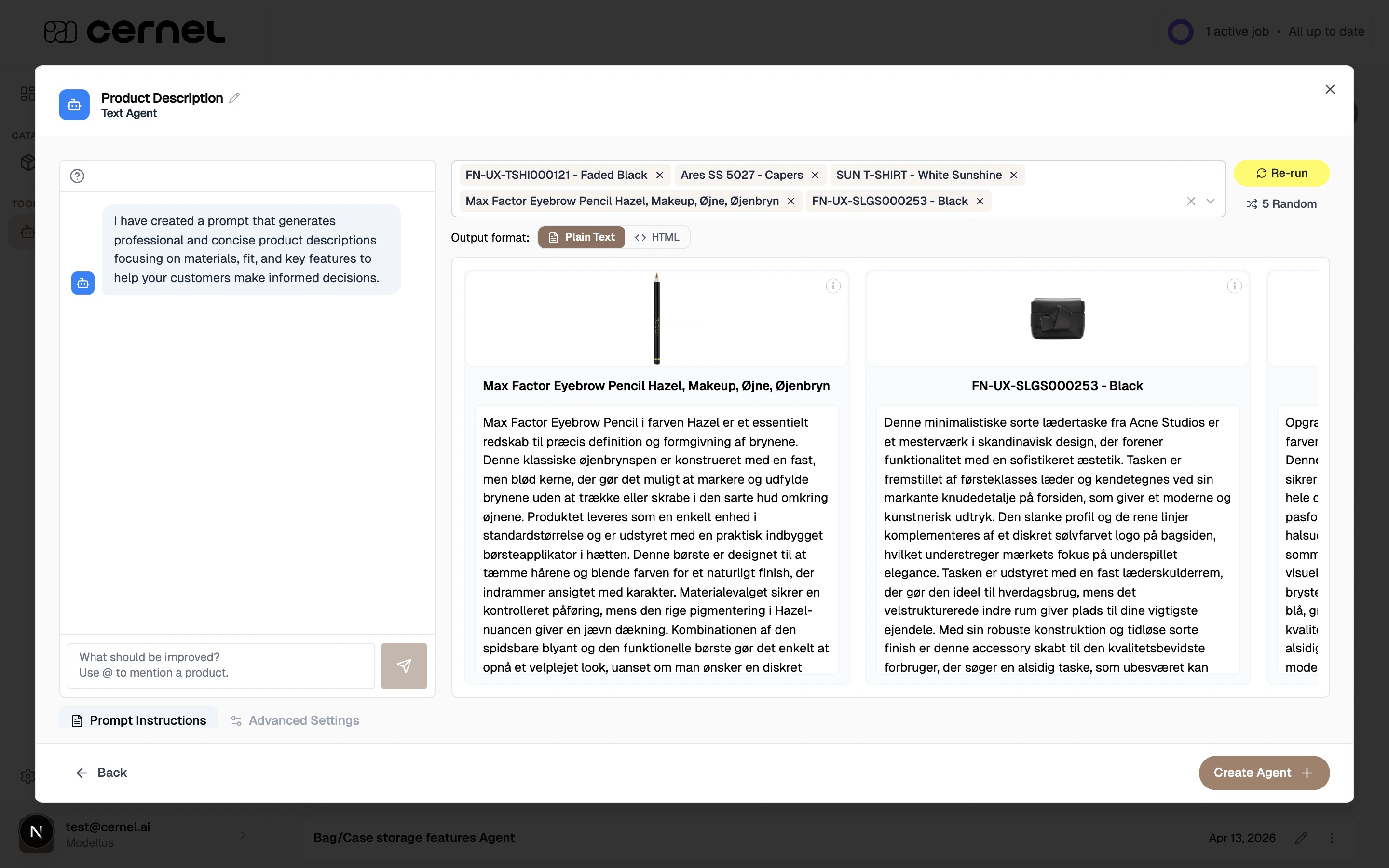

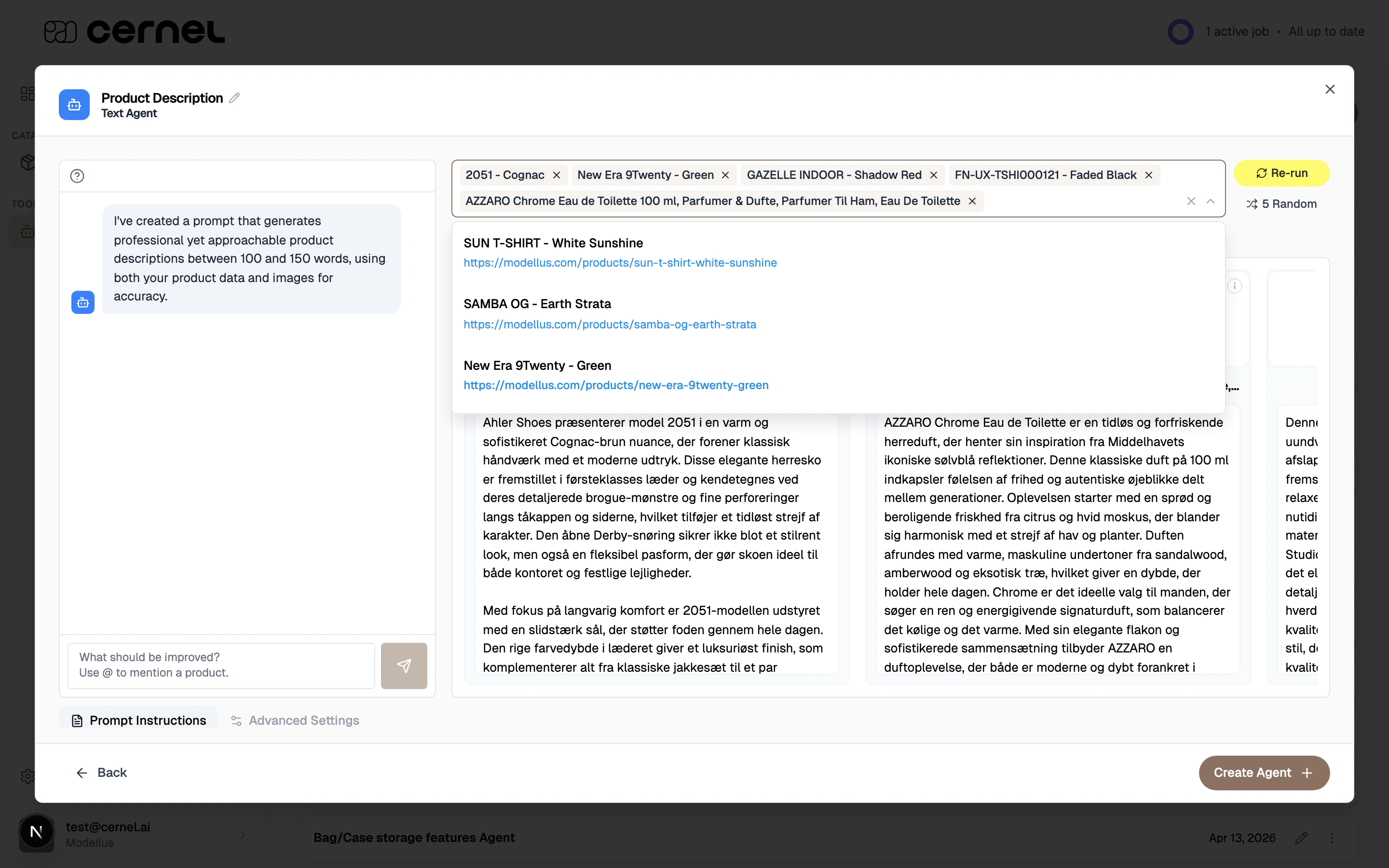

Use the product picker to set which products the agent should run against. You can:

- Search and pick manually: type part of a product name, click results to add them

- Click 5 Random: let Cernel pick 5 random products from the current category, preferring ones with images
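As an illustration of what "preferring ones with images" could mean, here is a minimal sketch in Python. It is not Cernel's implementation: the `Product` type, the `pick_random_sample` function, and the rule of filling the sample from products with images first are all assumptions made for the example.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Product:
    # Hypothetical product record; field names are illustrative only.
    id: str
    name: str
    category: str
    has_image: bool


def pick_random_sample(
    products: List[Product],
    category: str,
    k: int = 5,
    rng: Optional[random.Random] = None,
) -> List[Product]:
    """Pick up to k random products from one category.

    Assumed preference rule: products with images are used first, and
    products without images only fill the remaining slots.
    """
    rng = rng or random.Random()
    in_category = [p for p in products if p.category == category]
    with_images = [p for p in in_category if p.has_image]
    without_images = [p for p in in_category if not p.has_image]
    rng.shuffle(with_images)
    rng.shuffle(without_images)
    return (with_images + without_images)[:k]


# Example: three products with images, three without, one in another category.
catalog = [
    Product("1", "Trail shoe", "shoes", True),
    Product("2", "Road shoe", "shoes", True),
    Product("3", "Track spike", "shoes", True),
    Product("4", "Sandal", "shoes", False),
    Product("5", "Slipper", "shoes", False),
    Product("6", "Clog", "shoes", False),
    Product("7", "T-shirt", "tops", True),
]
sample = pick_random_sample(catalog, "shoes", k=5, rng=random.Random(0))
```

Under this assumed rule the sample always contains every product that has an image before any product without one is added, which is why hand-picking is still useful when you want to test products that lack images.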

Run the agent

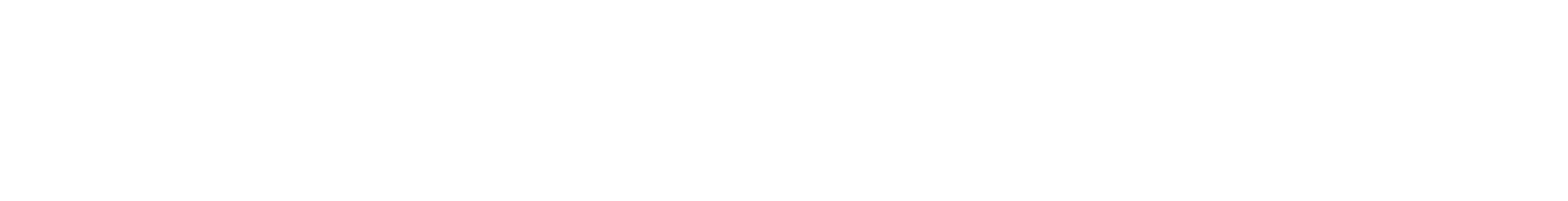

Click Run. The sandbox works through each product: analyzing configuration, processing products, running agent logic, and generating results. Each result shows the product’s name and image alongside the generated value for the agent’s attribute.

On the next run, the button becomes Re-run, so you can generate fresh output on the same selection as you iterate.

Review the results

Scan the generated values. For any result you can:

- Hover the value to see the AI Reasoning, a breakdown of how the agent arrived at the output

- Click View details (info icon on the product card) to open the detail drawer, which shows the compiled Prompt the model received, the Source Data (product attributes used as context), and the Result in full

Refine through chat

If the output needs work, use the agent chat on the left. Type what you want to change. For example:

- “Make the descriptions shorter, aim for 80 to 100 words.”

- “Start every description with the material, then move to fit.”

- “Don’t mention competitor brands.”

Step through versions with undo and redo

Every refinement creates a new version of the prompt. Use the Undo and Redo buttons at the top of the chat panel to move between versions. Each step takes you one change back or forward, so you can compare the output of consecutive versions and roll back any change that didn’t land.
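The one-step-at-a-time behavior can be pictured as a simple linear history. The sketch below is an illustration in Python, not the product's code: the `PromptHistory` class and its method names are hypothetical, and it assumes that refining after an undo discards the versions you stepped back over, which the article does not state.

```python
from typing import List


class PromptHistory:
    """Hypothetical linear version history for an agent prompt."""

    def __init__(self, initial_prompt: str) -> None:
        self._versions: List[str] = [initial_prompt]
        self._index = 0  # position of the current version

    @property
    def current(self) -> str:
        return self._versions[self._index]

    def refine(self, new_prompt: str) -> None:
        # Assumption: a new refinement replaces any versions ahead of the
        # current one, so redo is no longer possible afterwards.
        del self._versions[self._index + 1:]
        self._versions.append(new_prompt)
        self._index += 1

    def undo(self) -> bool:
        """Step one version back. Returns False at the oldest version."""
        if self._index == 0:
            return False
        self._index -= 1
        return True

    def redo(self) -> bool:
        """Step one version forward. Returns False at the newest version."""
        if self._index == len(self._versions) - 1:
            return False
        self._index += 1
        return True


history = PromptHistory("Write a product description.")
history.refine("Write a product description of 80 to 100 words.")
history.refine("Write 80 to 100 words. Start with the material.")
history.undo()  # back to the 80-to-100-word version
```

In this model, saving the agent would commit `history.current`, whichever version you have stepped to.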

Save the agent

When the output is where you want it, save the agent. The current prompt (the version you are on in the history at the moment you save) is committed and linked to the agent. Your sandbox selection and chat history are cleared; the agent is now ready to be linked to attributes and run through enrichment.

The agent is saved with the prompt you tested. Running enrichment with this agent will produce output similar to what you just saw in the sandbox.

Advanced configuration

Referencing a specific product in chat

The agent chat supports @ mentions. Typing @ opens a list of the products in your current sandbox run. Click one to insert it as a pill into your message. When the chat rewrites the prompt, it uses that product’s generated output, reasoning, and images as grounded feedback, not just the text you typed.

Use this when one product is off but the rest look fine. Instead of rewording the entire prompt, point the chat at the outlier and describe what’s wrong with it specifically.

Inspecting what the model actually saw

The detail drawer (View details on any result) shows three tabs:

- Prompt: the compiled prompt that was sent to the model for that product, with Data Source references and product fields already filled in. If the output is surprising, check the compiled prompt first to see whether the right context made it in.

- Source Data: the product attributes the agent used as context, including the product name, images, and any referenced fields

- Result: the generated value on its own, rendered the way the product table will render it

Switching attribute type mid-iteration

If you change the agent’s attribute type during the wizard (for example, from Text to HTML, or from Single Select to Multi Select), the sandbox automatically asks the chat to adapt the prompt for the new type. This keeps the instructions aligned with what the new type expects, so you don’t have to rewrite them from scratch.

After the prompt is adapted, re-run the sandbox to see output in the new format.

What gets saved and what doesn't

- Saved with the agent: the prompt as it stands at the moment you save (the current version in history), plus any configuration changes made in earlier wizard steps

- Not saved: the list of sandbox products you tested on, individual sandbox results, and the agent chat transcript

Frequently asked questions

Why is my sandbox output weaker than what I expected from enrichment?

Two common causes:

- The agent has dependencies that haven’t been filled in on the test products. The sandbox doesn’t run upstream agents first. If your agent reads other attribute values, pick test products that already have those values.

- The selected products don’t represent your catalog well. Try clicking 5 Random again, or hand-pick a mix of product types and price points. Outputs can vary a lot between, say, a simple t-shirt and a technical running shoe.
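If you have an export of your product data, a quick check like the following can help you find test products that already have the values your agent depends on. This is a sketch under stated assumptions: the dictionary layout, the attribute names, and the helper functions `is_filled` and `products_ready_for_sandbox` are hypothetical and not part of Cernel.

```python
from typing import Any, Dict, List


def is_filled(value: Any) -> bool:
    """Treat None, blank strings, and empty collections as missing."""
    if value is None:
        return False
    if isinstance(value, str):
        return value.strip() != ""
    if isinstance(value, (list, tuple, set, dict)):
        return len(value) > 0
    return True  # numbers and booleans count as filled, including 0 and False


def products_ready_for_sandbox(
    products: Dict[str, Dict[str, Any]],
    required_attributes: List[str],
) -> List[str]:
    """Return IDs of products where every required attribute is filled."""
    return [
        product_id
        for product_id, attributes in products.items()
        if all(is_filled(attributes.get(name)) for name in required_attributes)
    ]


# Hypothetical export: product ID mapped to attribute values.
exported = {
    "sku-1": {"material": "Cotton", "fit": "Regular"},
    "sku-2": {"material": "", "fit": "Slim"},
    "sku-3": {"material": "Mesh"},
}
ready = products_ready_for_sandbox(exported, ["material", "fit"])
```

Here only the products in `ready` have both attributes the example agent reads, so they are the ones worth picking in the sandbox.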

Can I pick more than 10 products?

No. The sandbox runs on up to 10 products per run by design, so refinement stays fast. Ten is typically enough to spot patterns in the output. Once you’re happy with the agent, run enrichment to generate content across the rest of the catalog.

Can I jump back to a specific version in the history?

Not directly. Undo and redo move one step at a time through the prompt’s history, so you can compare consecutive versions. If the current direction isn’t working, undo back to the version you liked and start a new refinement from there.

Does the agent chat call the LLM every time I hit send?

Yes. Each message generates a new prompt and re-runs the sandbox on your selected products, so you get fresh output for the new version. Keep chat messages focused: one clear change per message is easier to verify than a long instruction that rewrites several things at once.

What happens to my chat when I close the wizard?

The agent chat transcript isn’t stored. The prompt version you saved with the agent is kept, but the conversation that produced it is discarded. If you reopen the agent, the sandbox starts fresh.

Can I test without running the sandbox first?

Technically yes. You can send chat messages before the sandbox has run, and the chat will still refine the prompt. But the refinements aren’t grounded in any output, so the results are less predictable. The recommended flow is: run the sandbox, read the output, then refine.

What’s next

AI Agents

Learn the full agent setup: prompts, Data Sources, dependencies, and linking agents to categories.

Your First Enrichment

Take a sandbox-tested agent and run it across a full category.

Attributes

Understand the attribute types the sandbox tests against, and how they’re configured on categories.

Data Sources

Plug brand guidelines and context libraries into prompts so sandbox output lines up with your brand.